During this project, I was migrating a critical system from .NET Framework to .NET 8 as part of a move-to-cloud initiative. The legacy system was not designed to be cloud-native and lacked the kind of tests I described in the Running Tracker project.

The new Web API covers the part of the monolith responsible for retrieving from the database all the trades made by a number of clients and confirmed on B3 (the Brazilian stock exchange). That data was then processed to calculate taxes and commissions.

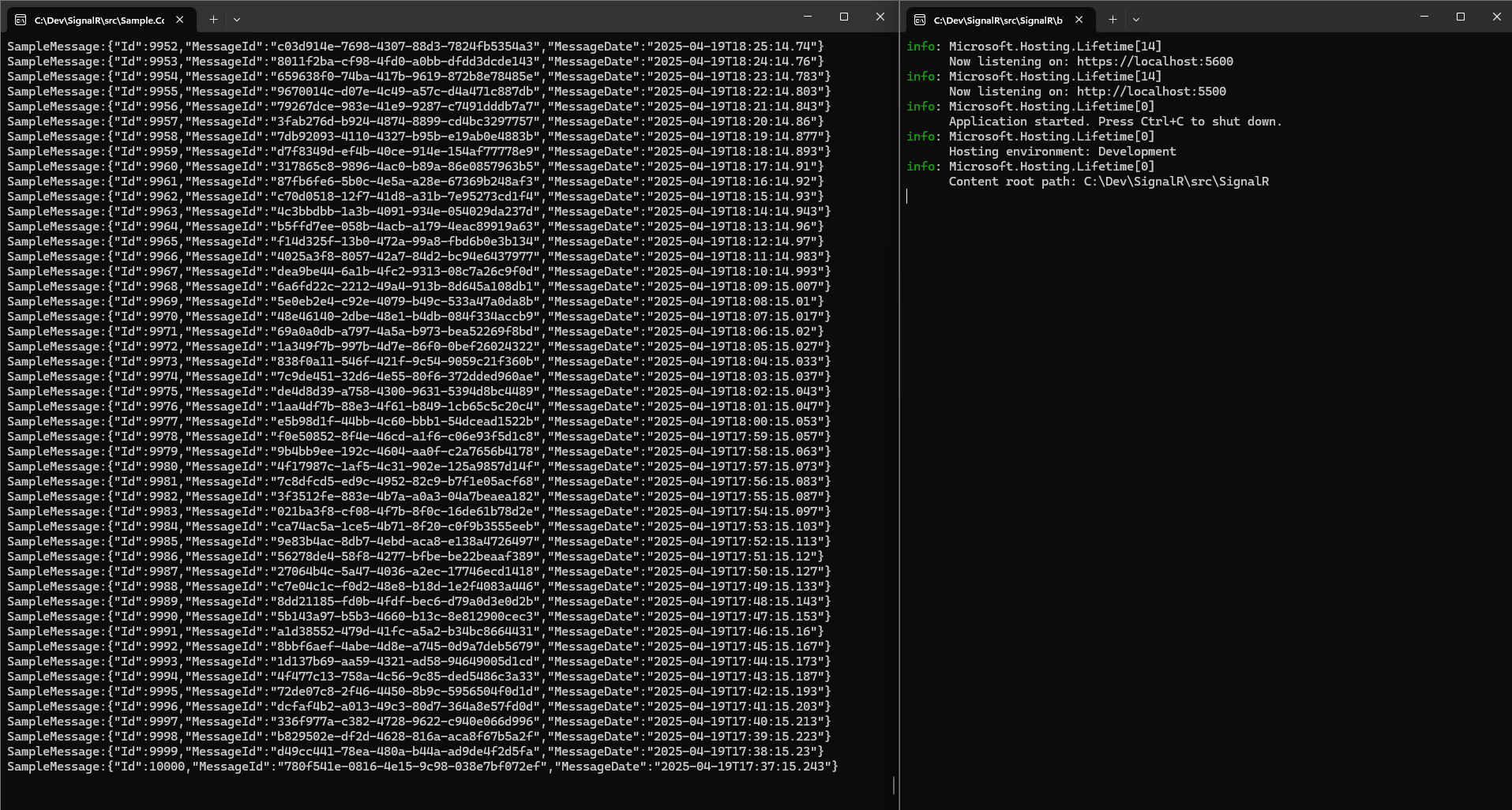

I used SignalR to push data in real time to one or more clients over WebSockets, which removes the pagination overhead of a request/response Web API. I also tuned the Dapper queries to stream rows from SQL Server instead of buffering them, which is critical for high-volume financial systems.

This let consumers process data in real time. The full database operation could take several minutes to complete, so rather than wait for it to finish, I transmitted each row to the clients as it arrived from the database:

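```csharp
// Requires: using System.Runtime.CompilerServices; using Dapper;
public async IAsyncEnumerable<SampleMessage> StreamAllAsync(
    [EnumeratorCancellation] CancellationToken cancellationToken = default)
{
    const string sql = "SELECT Id, MESSAGE_ID AS MessageId, MESSAGE_DATE AS MessageDate FROM dbo.SAMPLE_MESSAGES ORDER BY Id";

    var command = new CommandDefinition(
        commandText: sql,
        flags: CommandFlags.None, // no Buffered flag: rows are read lazily from the data reader
        cancellationToken: cancellationToken
    );

    // With an unbuffered command, QueryAsync returns a deferred sequence over the open reader.
    var rows = await _connection.QueryAsync<SampleMessage>(command).ConfigureAwait(false);

    foreach (var row in rows)
    {
        cancellationToken.ThrowIfCancellationRequested();
        yield return row; // hand each row to the consumer as soon as it is read
    }
}
```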
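
To make the SignalR side more concrete, here is a minimal sketch of how a row stream like this might be exposed to clients through a hub. SignalR treats a hub method that returns `IAsyncEnumerable<T>` as a server-to-client stream and cancels the supplied token when the client stops listening. The names `MessagesHub`, `IMessageSource`, `FakeMessageSource`, and `StreamMessages` are hypothetical and not taken from the project, the property types on `SampleMessage` are assumed, and the fake source stands in for the Dapper-backed query so the sketch does not need a database.

```csharp
using System;
using System.Collections.Generic;
using System.Runtime.CompilerServices;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.AspNetCore.SignalR;

// Assumed shape of the message; the real property types may differ.
public sealed record SampleMessage(int Id, string MessageId, DateTime MessageDate);

// Hypothetical abstraction over the database-backed stream.
public interface IMessageSource
{
    IAsyncEnumerable<SampleMessage> StreamAllAsync(CancellationToken cancellationToken = default);
}

// Stand-in source that emits three rows with a small delay, simulating a slow query.
public sealed class FakeMessageSource : IMessageSource
{
    public async IAsyncEnumerable<SampleMessage> StreamAllAsync(
        [EnumeratorCancellation] CancellationToken cancellationToken = default)
    {
        for (var id = 1; id <= 3; id++)
        {
            await Task.Delay(100, cancellationToken);
            yield return new SampleMessage(id, $"MSG-{id}", DateTime.UtcNow);
        }
    }
}

// Hypothetical hub: each row is forwarded to the caller as soon as the source yields it.
public sealed class MessagesHub : Hub
{
    private readonly IMessageSource _source;

    public MessagesHub(IMessageSource source) => _source = source;

    public IAsyncEnumerable<SampleMessage> StreamMessages(CancellationToken cancellationToken)
        => _source.StreamAllAsync(cancellationToken);
}
```

In an ASP.NET Core app, a hub like this would typically be registered with `builder.Services.AddSignalR()` and mapped with `app.MapHub<MessagesHub>("/hubs/messages")`, where the route is also just an example.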

By the end of the project, tests had been implemented in production and the calculation time had been halved to an average of 10 minutes. The Web API was designed for AKS deployment with CI/CD readiness, and horizontal scaling was implemented to handle the request load. The system handled approximately 1 million records per day without pagination delays.

I was responsible for developing the Web API that connected to the database and streamed the data to the clients via SignalR.
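
On the consuming side, a .NET client could read such a stream roughly as follows. This is a sketch under assumptions, not the project's actual client: it uses the `Microsoft.AspNetCore.SignalR.Client` package, and the `StreamClient` class, the hub URL passed in by the caller, the `StreamMessages` method name, and the `SampleMessage` property types are all hypothetical.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.AspNetCore.SignalR.Client;

// Assumed shape of the message; the real property types may differ.
public sealed record SampleMessage(int Id, string MessageId, DateTime MessageDate);

public static class StreamClient
{
    // Connects to the hub, prints each message as it arrives, and returns how many were received.
    public static async Task<int> ConsumeAsync(string hubUrl, CancellationToken cancellationToken = default)
    {
        await using var connection = new HubConnectionBuilder()
            .WithUrl(hubUrl)
            .WithAutomaticReconnect()
            .Build();

        await connection.StartAsync(cancellationToken);

        var count = 0;

        // StreamAsync yields items one by one instead of waiting for the full result set.
        await foreach (var message in connection.StreamAsync<SampleMessage>("StreamMessages", cancellationToken))
        {
            Console.WriteLine($"{message.Id}: {message.MessageId} at {message.MessageDate:O}");
            count++;
        }

        return count;
    }
}
```

A caller would invoke it with something like `await StreamClient.ConsumeAsync("https://localhost:5001/hubs/messages")`, where the URL is only a placeholder. Because items are handled inside the `await foreach` loop, processing can begin while the server is still reading from the database.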

If the application had not met the required speed and latency, I was prepared to change how the data was serialized. We considered Protocol Buffers for serialization and RabbitMQ for messaging, but neither was required in the final solution.

The tech stack is .NET 8, SignalR, Dapper, Docker Compose, and SQL Server.

The project repository has a more technical explanation and instructions for running the project. Once it starts, you will see the messages being streamed to the client and consumed in real time.

Connect via LinkedIn to discuss real-time systems.